Assessing students’ writing adds up to one big, expensive technical headache for researchers.

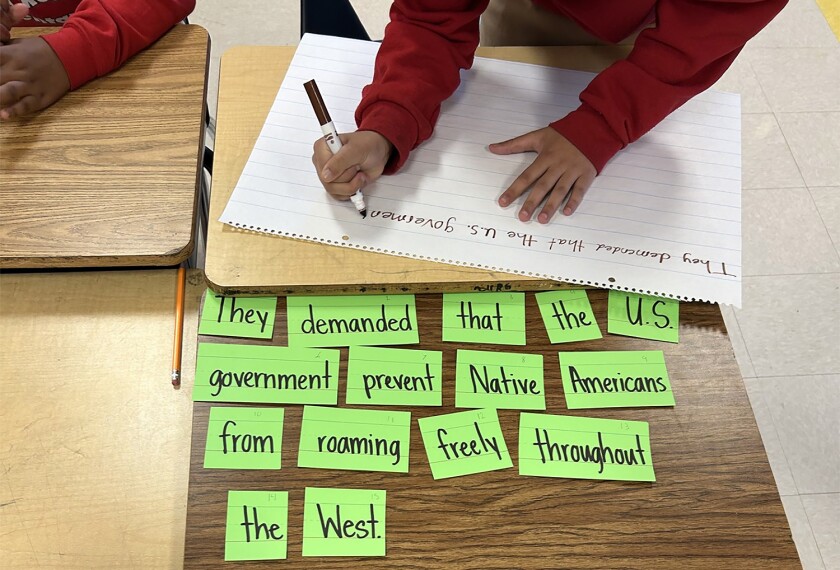

Daniel Ferri thought he was giving his 6th graders helpful tips for taking the Illinois state exam when he tacked Post-it notes on their test booklets to remind them to “darken circles.” But one independent thinker in the group, taking the teacher’s note as a prompt for the essay part of the test, proceeded to write five paragraphs on the subject of “dancin’ circles.”

“Well,” the student’s essay began, “I never thought much about dancin’ circles before, but if that’s what you want me to write about, here goes ...”

That anecdote from two years ago, which Ferri, who teaches in a Lombard, Ill., middle school, recounted in a commentary broadcast in March on National Public Radio, points up one of the inherent difficulties of using large-scale testing to assess students’ writing abilities. Though presumably few students would confuse a handwritten note with the official essay directions, writing performance on such tests is notoriously variable.

Many students, for example, write better in one genre than in another, or produce finer prose when the topic is more familiar or more interesting to them. And judgments about what makes good writing are often more subjective than testing experts would like them to be.

It all adds up to one big, expensive technical headache for the researchers who develop and monitor assessment programs.

“Writing by its very nature has reliability problems,” says H.D. Hoover, an author of the Iowa Tests of Basic Skills, which have been measuring student writing samples since 1991. “You see an awful lot of variation that occurs that is unexplainable.”

The same sorts of problems came to light recently in the National Assessment of Educational Progress, the congressionally mandated testing program used to take a national pulse on student achievement. (“NAEP Drops Long-Term Writing Data,” March 15, 2000.)

Concerned that long-term trend results from the program’s writing tests were untrustworthy, the national board that oversees the assessment voted two months ago to scrap the questionable data pending further analysis. Now, when researchers look to the NAEP site on the World Wide Web to see how well students wrote in 1994 and 1996, they will come up empty-handed. That part of American students’ writing history has been removed from the archives.

Results from the main NAEP writing test, on the other hand, remain in place. The main test, on which state-by-state results are based, is much bigger and is revised as NAEP standards change. The trend test, which is much smaller, consists of the same questions year after year.

Because it tracks progress over a long period of time, the national exam’s task is more complicated than that of most assessments used by states and school districts. Even so, NAEP’s problems should raise at least a small cautionary flag for testing programs nationwide, says Gary W. Phillips, the acting commissioner of the National Center for Education Statistics. “All testing programs that use performance assessments with a very small number of items are going to have difficulty monitoring trends,” he says. “We were able to detect these problems over 14 years. Most testing programs are not around that long.”

A Costly Process

The resurgence of interest in what is called direct assessment of student writing came amid the broader movement toward performance assessment that began in the 1970s and early 1980s. Convinced that traditional fill-in-the-bubble exams were not tapping into the full range of students’ knowledge and abilities, educators, researchers, and policymakers began looking for ways to measure what students could actually do with what they knew.

“If you want to learn more about how a student can write, you will better learn that by asking a student to write,” says Daniel Koretz, a senior social scientist for the RAND Corp. and a professor of research, measurement, and evaluation at Boston College.

States also saw writing requirements and other kinds of performance tasks as a way to signal to schools what was important in classroom instruction. If teachers were going to “teach to the test” anyway, the reasoning went, then at least they would be teaching needed skills, such as writing.

Now, 35 states ask students to produce real writing samples as part of their assessment programs, according to the Council of Chief State School Officers, based in Washington. And nearly all major test publishers incorporate writing prompts in their off-the-shelf tests, usually in combination with multiple-choice questions designed to assess a student’s grasp of grammar, spelling, and vocabulary.

But along the way, testing experts have learned, assessing writing has proved more expensive, time-consuming, and technically frustrating than many had hoped.

One of the biggest problems, as the Illinois teacher’s anecdote illustrates, is the unpredictability of student performance. Some researchers contend, for example, that students write better when they are more familiar with the subject matter.

“You have to write what you know about,” says Eva L. Baker, a co-director of the Center for Research on Evaluation, Standards, and Student Testing, a federally funded research center based at the University of California, Los Angeles.

Some teachers in New York state were troubled last year when their students were assigned as part of the state’s graduation exams to write an essay persuading school board officials to provide Suzuki violin lessons in school. Even though the students were given background information on the subject, the teachers wondered if the students, many of whom came from low-income households, would think Suzuki was a car manufacturer, according to a Harvard University researcher who was studying the schools.

For the most part, however, test developers have become masters at asking students to write on topics with which everyone is likely to be familiar, such as school uniforms or summer vacations. The trouble with such bland questions is that they may fail to motivate students, or may leave them at a loss for something interesting to say.

The Educational Testing Service, which incorporated a writing test into its Graduate Record Examinations battery for the first time last fall, is trying an alternative approach to the problem of subject-matter familiarity: it publishes the entire pool of writing “prompts” that students might encounter on the exam. On the actual test, students must write on only two topics, though ambitious students are free to research all 80 prompts beforehand if they wish.

“The idea is to get away from the on-demand nature of things so you’re not totally surprised by what you get in the exam,” says Donald E. Powers, a research scientist at the Princeton, N.J.-based nonprofit testing company.

‘Magic’ Number?

Other countries, such as Australia, use a different tactic: Provide students with a collage of information from which they must craft an essay. “The U.S. is almost unique in asking questions at this massively general level,” Baker says.

Variability also occurs when students are asked to write in more than one genre. A skilled narrative writer, for example, might falter when asked to produce an expository essay, and vice versa.

Given all that volatility, test-makers must use an adequate number of writing prompts to get a fair reading of students’ writing proficiency. “If you have a multiple-choice math test with 45 or 50 questions, how you do on question 13 or 14 doesn’t matter that much because it all averages out,” says Koretz. But with writing, he adds, a student’s final score may well be riding on one or two questions.

Having too few writing prompts was the primary problem with NAEP’s long-term trend results, Phillips of the National Center for Education Statistics says. Students were asked to complete five or six writing tasks on that test. In comparison, the new version of the main NAEP test, given for the first time in 1998, uses 20 prompts at each grade level.

Though long suspected, the problem with the long-term test did not come to light until this year, when researchers were correcting an error in the formula used to scale the 1999 test. Some added quality-control checks made at the same time revealed that the test results varied according to the method of analysis the researchers used.

“When that was reported to me, I decided we can’t really report on an assessment that depends this much on which type of analysis we use,” Phillips said. The statistics agency, an arm of the U.S. Department of Education, is now re-examining last year’s data to determine whether more sophisticated scoring procedures could salvage the results.

Is there a “magic” number of prompts to use on a standardized writing test? That depends on many different factors, most test experts say.

“You probably need between six and 10 tasks to get a reliable measure of performance,” says Richard J. Shavelson, the dean of the school of education at Stanford University. His conclusion comes from a review of a wide variety of tasks used in performance assessments.

Many states use far fewer items, Shavelson points out, and universities often decide which students to place in a remedial-writing course on the basis of a single writing sample.
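Psychometricians commonly use the Spearman-Brown prophecy formula to project how reliability grows as tasks are added to a test. Shavelson’s figure comes from his review of actual assessments, not necessarily from this formula, so treat what follows as an illustrative sketch. It assumes every prompt is a parallel form, equally difficult and measuring the same skill, which writing prompts often are not. The single-prompt reliability of 0.4 is an assumed value in the range Hoover reports for performance tasks, and the function name `spearman_brown` is hypothetical.

```python
def spearman_brown(single_task_reliability: float, n_tasks: int) -> float:
    """Projected reliability of a score averaged over n parallel tasks.

    Spearman-Brown prophecy formula: k * r / (1 + (k - 1) * r).
    Assumes the tasks are interchangeable (parallel) measures of one skill.
    """
    r, k = single_task_reliability, n_tasks
    return k * r / (1 + (k - 1) * r)


if __name__ == "__main__":
    # Illustrative single-prompt reliability (an assumption, not a measured value).
    r = 0.4
    for k in (1, 2, 6, 10, 20):
        print(f"{k:>2} prompts -> projected reliability {spearman_brown(r, k):.2f}")
```

Under these assumptions, one prompt yields about .40, six prompts about .80, and ten about .87, in keeping with the six-to-10 range Shavelson cites. The gains flatten after that, which is one reason test-makers weigh each added prompt against the 30 to 40 minutes it costs.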

Reliability Concerns

The problem is that each writing task might take students 30 to 40 minutes to complete. Adding too many such items could make a test too cumbersome to administer, especially now that pockets of parents and educators around the country complain that, in this era of school accountability, testing is taking up too much valuable instructional time.

What’s more, the longer the essays take to write, the longer it takes to score them. Most have to be read by two or more graders. The nation’s biggest-selling test publisher, CTB/McGraw-Hill of Monterey, Calif., has devised computer technology that allows graders to read and score writing samples without ever handling a piece of paper. The idea is to decrease errors, increase speed, and increase objectivity by displaying the writing samples on a computer screen on which the student is not identified. But in the end, a human being still has to read every essay.

Multiple-choice tests, in contrast, can be machine-scored at up to 5,000 sheets an hour, according to Michael H. Kean, the vice president for public and governmental affairs at CTB/McGraw-Hill. As a result, the company’s assessments that use writing samples can cost from two to 10 times as much per student.

Researchers have recently developed technology that allows a computer to actually grade student essays. The machines use proxies for good writing, such as the length of an essay. But many testing experts still mistrust that technology, despite studies showing that the computer graders may be just as reliable as human scorers.

In the early 1990s, the Iowa Testing Programs and its publisher, Riverside Publishing Co., spent four years and $370,000 creating the Iowa Writing Assessment, the program’s first direct assessment of writing. The test, which takes 40 minutes to administer, sells for $5 to $9 a student, depending on the level of scoring detail the customer wants.

By contrast, Hoover notes in a 1995 paper on the subject, the complete five-hour battery of the Iowa Tests of Basic Skills costs $5 to administer and yields scores in 13 areas, including reading comprehension, science, and math problem-solving.

“We’re interested in providing writing assessments, but the cost associated with developing the writing assessment was such that ... we won’t get back the income to pay for this in my lifetime,” Hoover said in a recent interview. “The cost per unit of information is just very high, and there’s no way to make it much cheaper.”

What’s more, he adds, most performance-based tasks, including writing, have a comparatively low reliability coefficient: a number between 0 and 1 that indicates how consistently students would score if they took the same test on a different day, with values closer to 1 meaning more consistent results.

Those tasks have a reliability coefficient of .35 or .4—roughly half as high as that for the Iowa Test of Reading Comprehension. To understand what that low coefficient means, Hoover suggests thinking of a test in which a quarter of test-takers fall into each of four achievement levels: advanced, proficient, basic, and below basic. If 400 students took that test, then 100 students would land in each of the four categories.

Then give the test the next day to the same group of students using a different prompt, but one that requires writing in the same genre. With a reliability coefficient of .4, Hoover says, only 43 of the students ranked as advanced the previous day will land in the same category again, another 28 will have been bumped down to proficient, and 19 will have moved down to basic. The remaining 10 will get a below-basic ranking.
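Hoover’s example can be reproduced with a short simulation. The sketch below assumes, as a modeling choice not stated in his account, that first-day and second-day scores are normally distributed and correlated at the reliability coefficient of 0.4; the names `retest_outcomes` and `level` are hypothetical. It simulates many students, keeps those rated advanced on the first day, and reports how a group of 100 of them would spread across the four levels on the second day.

```python
import math
import random
from statistics import NormalDist

LEVELS = ["advanced", "proficient", "basic", "below basic"]


def level(score, cuts):
    """Map a standard-normal score to one of four equal-sized achievement levels."""
    if score >= cuts[2]:
        return 0  # advanced (top quarter)
    if score >= cuts[1]:
        return 1  # proficient
    if score >= cuts[0]:
        return 2  # basic
    return 3      # below basic (bottom quarter)


def retest_outcomes(reliability=0.4, n=100_000, group=100, seed=1):
    """Simulate day-1/day-2 scores whose correlation equals `reliability`.

    Returns the expected number of day-1 "advanced" students, per `group`
    of them, who land in each of the four levels on day 2.
    """
    rng = random.Random(seed)
    nd = NormalDist()
    # Quartile cut points of the standard normal: a quarter of students per level.
    cuts = [nd.inv_cdf(p) for p in (0.25, 0.50, 0.75)]
    noise_sd = math.sqrt(1.0 - reliability ** 2)
    counts = [0, 0, 0, 0]
    for _ in range(n):
        day1 = rng.gauss(0.0, 1.0)
        # Day-2 score: unit variance, correlation `reliability` with day 1.
        day2 = reliability * day1 + noise_sd * rng.gauss(0.0, 1.0)
        if level(day1, cuts) == 0:
            counts[level(day2, cuts)] += 1
    total = sum(counts)
    return [group * c / total for c in counts]


if __name__ == "__main__":
    for name, share in zip(LEVELS, retest_outcomes()):
        print(f"{name:>12}: {share:5.1f}")
```

Under these assumptions the simulated split comes out close to Hoover’s figures, with roughly four in 10 staying advanced and the rest falling into proficient, basic, and below basic in decreasing numbers. Setting `reliability=0.0` puts about 25 students in each level, which is what pure chance would produce.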

A ‘Research Agenda’

That’s one reason why most testing experts advise against using a single test of any kind to make “high stakes” decisions about a student’s academic future.

But there is more than one way to look at reliability, and the degree of reliability required in a test varies according to the purpose of the test.

While NAEP is not a high-stakes test for students, its mission of tracking the nation’s progress over the long term puts an added technical burden on it. In any assessment that aims to gather trend data, test-makers either have to use the same items year after year or come up with new ones that cover the same ground.

“The essential issue is that no two prompts are equally difficult,” says Paul L. Williams, a senior managing scientist for the American Institutes for Research, a private organization based in Washington. “Over time,” he says, “the achievement of students gets confounded with the difficulty of the prompts.”

And it’s easier to come up with a comparably difficult item in math, for example, than it is in writing, where qualities such as creativity also come into play.

Keeping long-term data is less of a concern for states, most of which change their testing programs frequently to meet political demands, says Pasquale J. DeVito, Rhode Island’s assessment director. In his own state, the form of the tests, the grades tested, and the way the results are reported have all changed over the past 20 years. Now the state uses its own assessment, plus a package of assessments developed by the New Standards project, a consortium of states that have pooled resources to create testing programs that use more performance-based tasks.

“More research needs to be done on ways tests are put together, what they can tell you over time, and how reliable they will be over time,” DeVito says. “This whole area of performance assessments is a research agenda for the future.”

But teachers such as Ferri worry that standardized measurement of student writing will always come at too high an instructional price. In his own district, he says, students are taught to conform to a standard number of paragraphs, to have introductory and concluding paragraphs, and to use approved transition words, among other items on test scorers’ checklists. “That has nothing to do with the joy of writing, of understanding language, or of wanting to write,” he says, “and the kids hate it.”

The Research section is underwritten by a grant from the Spencer Foundation.