One of my former principals developed a strategy for increasing attendance at family/student/teacher conferences.

He counted the families who attended conferences during his first year as principal and then set a goal for the next round. He figured that attendance ought to increase by the same amount each time after that.

Each time we had conferences, his goal was 25 more families than the previous conference’s goal. We started out with something like 100 families, so the next goal was 125, then 150... you get the idea.

The plan worked great at first, and we hit our target for a few conferences in a row. But then we missed the goal, and we missed it again, and we missed it again. What was going on?

Eventually, we realized what should have been obvious to anyone who passed 8th grade math: the phenomenon we were measuring was not linear.

At first, we were able to achieve a 25-family increase with relatively small inputs, such as updating our website and calling or emailing families.

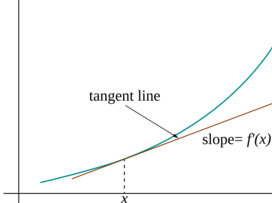

Yet this success also shrank the pool of non-attending families, and the ones who were left often stayed away for significant reasons (family crisis, work or other economic pressures, extreme alienation) that we weren’t addressing effectively. The same amount of “input” no longer achieved the desired change in “output.” (A better mathematical model for this type of phenomenon is logarithmic: note how y changes less and less as x gets larger.)
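To make the mismatch concrete, here is a minimal Python sketch comparing the principal’s linear goals (start at 100, add 25 per conference) with a logarithmic attendance curve. The response model, y = 100 + 60 ln(1 + n) where n counts conferences of the same outreach effort, and the scale factor of 60 are assumptions picked for illustration only; they are not our school’s actual attendance figures.

```python
import math

# Hypothetical parameters for illustration only; NOT real attendance data.
BASELINE = 100   # families at the first conference ("something like 100")
GOAL_STEP = 25   # the principal's fixed increase in the goal per conference
GAIN = 60        # assumed scale of the logarithmic response (made up)


def linear_goal(n: int) -> float:
    """Goal for conference n: a straight line, baseline + 25 per conference."""
    return BASELINE + GOAL_STEP * n


def modeled_attendance(n: int) -> float:
    """Assumed diminishing-returns model: same input each time, y = 100 + 60*ln(1 + n)."""
    return BASELINE + GAIN * math.log1p(n)


def compare(conferences: int = 7) -> list[tuple[int, float, float, bool]]:
    """Return (conference, goal, modeled attendance, goal met?) for each conference."""
    rows = []
    for n in range(conferences):
        goal = linear_goal(n)
        actual = modeled_attendance(n)
        rows.append((n, goal, actual, actual >= goal))
    return rows


if __name__ == "__main__":
    for n, goal, actual, hit in compare():
        status = "hit" if hit else "miss"
        print(f"conference {n}: goal {goal:.0f}, modeled {actual:.1f}, {status}")
```

Under these made-up parameters, the curve stays above the line for the first few conferences and then falls short every time after, because each new conference adds fewer families than the one before (roughly 42, then 24, then 17).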

Many thought leaders and policymakers seem to make the same mistake my principal did when they look at test scores. Learning is not linear (think about the time you worked and worked without success on one part of a project, only to have an “aha” moment and fly through the rest), but you would not know this from reading the education opinion pages after the release of the most recent National Assessment of Educational Progress (NAEP) scores. We should not have assumed the scores would keep growing at previous rates, nor can we assume they will keep falling at the rate they did this year.

Folks from across the edu-political spectrum have made assumptions about the predictive nature of the NAEP score drop and used it as evidence for their positions. If you’re pro-Common Core, the drop shows that higher standards are pushing students “to adapt,” which portends future success. If you’re against testing, the drop shows the horrible impact of over-testing.

What NAEP data actually show is a snapshot that’s useful for seeing broad trends but not for identifying causal relationships. As Stephen Sawchuck put it in “When Bad Things Happen to Good NAEP Data”:

“The exam’s technical properties make it difficult to use NAEP data to prove cause-and-effect claims about specific policies or instructional interventions.”

We should not ignore the drop in NAEP scores, nor should we use it to suggest that our educational sky is falling. We should be more like the New York Mets’ management team.

Yes, I am a Mets fan, but their example is informative even if you don’t root for them. After hiring a new manager (Terry Collins) in 2010, the team posted four straight losing seasons and never finished close to the top of their division. But they had a long-term plan and they worked that plan. They didn’t ignore negative outcomes, but they didn’t let those outcomes undermine their plan either. This strategy took them to a division championship this year, and the future looks bright.

In calculus, local linearity refers to the fact that if you zoom in far enough, any smooth curve looks like a line. Of course, a line also looks like a line, so a short stretch of data that looks linear can’t tell us which one we’re dealing with. To make predictions, we need rich data and critical thought about the fundamentals of a situation, not simply outcomes.
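For readers who want the formula: local linearity says that near a point $a$, a differentiable function is well approximated by its tangent line. Applied to the logarithmic shape mentioned earlier (the natural log here is just an illustrative choice, not a fitted model), the tangent line at $a$ has slope $1/a$, which shrinks as $a$ grows.

$$
f(x) \approx f(a) + f'(a)\,(x - a) \qquad \text{for } x \text{ near } a
$$

$$
\ln x \approx \ln a + \frac{x - a}{a}
$$

Over a short stretch near $a$, the log curve and its tangent line are hard to tell apart, which is how a few early conferences could look like steady 25-family gains. Farther from $a$ the curve bends away below the line: because $\ln$ is concave, $\ln x \le \ln a + \frac{x - a}{a}$ for every $x > 0$.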

The Mets have strong fundamentals: an incredible and young pitching staff and strong veteran leadership. These characteristics of the team allow us to predict success next year.

The fundamentals of our educational system (and more generally the fundamentals of the society behind our educational system) are much more of a mixed bag. I see promising indicators in policies that promote deep learning and teacher leadership. I see worrisome indicators in growing segregation and economic inequality, as well as a trend toward demonizing teachers, our unions, and public schools.

Let’s make fundamentals, not outcomes, the focus of our discussion. Let’s make a long-term strategy for change based on proven models of success. Let’s incorporate negative feedback in a way that supports instead of undermines that long-term strategy. And, on an unrelated note, Let’s Go Mets!

Photo 1: “Mr Metciti”. Licensed under Public Domain via Wikipedia - https://en.wikipedia.org/wiki/File:Mr_Metciti.jpg#/media/File:Mr_Metciti.jpg

Photo 2: “Tangent-calculus” by Pbroks13 (derivative work of Tangent-calculus.png by Rhythm). Licensed under CC BY-SA 3.0 via Commons - https://commons.wikimedia.org/wiki/File:Tangent-calculus.svg#/media/File:Tangent-calculus.svg